Remote

Oriol Ferrer Mesià, Andy Cameron, Federico Urdaneta, Daniel Hirschmann

5 November, 2005

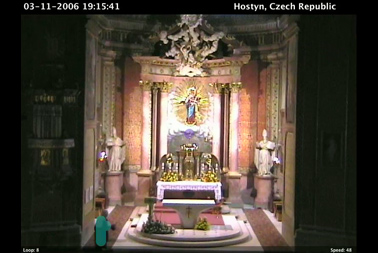


Remote is a visual and musical instrument based on real-time and near-real-time input from global webcams. It is about sequence, repetition and musicality, both auditory and visual. It is generative in that it uses events outside the user's control as the basis for animated musical loops. It is interactive in that the user can modulate the timing, length and combination of these loops to compose their own sequence in real time.

The software installation grabs images from a range of web cams around the world, saves the images to hard disk and then allows a player to play them back as looped sequences. The result is a time-lapse moving sequence of visual loops with a strong musical quality. In addition, the playback software tracks changes between images in the sequence, formats them as MIDI and sends the MIDI to Logic to create an auditory corollary of the visual sequence.
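As a rough illustration of the "formats them as MIDI" step, the sketch below encodes note-on and note-off messages as raw bytes. The byte layout is standard MIDI (a status byte carrying the channel, then note number and velocity), but the function names are hypothetical and nothing here is taken from Remote's own code; how the original software delivers its messages to Logic is not described in this text.

```python
def note_on(channel: int, note: int, velocity: int = 100) -> bytes:
    """Encode a MIDI note-on message: status 0x90 + channel, note, velocity."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be in 0-15")
    if not 0 <= note <= 127 or not 0 <= velocity <= 127:
        raise ValueError("note and velocity must be in 0-127")
    return bytes([0x90 | channel, note, velocity])


def note_off(channel: int, note: int) -> bytes:
    """Encode a MIDI note-off message: status 0x80 + channel, note, velocity 0."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be in 0-15")
    if not 0 <= note <= 127:
        raise ValueError("note must be in 0-127")
    return bytes([0x80 | channel, note, 0])
```

Using one MIDI channel per instrument would be one plausible way to keep the instruments separate in the sequencer, though that is an assumption rather than a documented detail.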

The playback interface allows the player to select which web cam is playing, change the number of frames in the loop and change the number of frames per second. For example, the player can select a web cam in New York's Times Square and change the loop from a sequence of 3 frames to 4 and then to 48. By selecting web cams in real time and changing their loop lengths, the player can jam with web cams from around the world.
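The two playback controls described above, loop length and frames per second, can be sketched as a simple schedule of which stored frame to show at which time. This is only an illustration: `loop_schedule`, its parameters and the choice to start the loop at a fixed `start` frame are assumptions, not details of the installation.

```python
from typing import List, Tuple


def loop_schedule(loop_length: int, fps: float, ticks: int,
                  start: int = 0) -> List[Tuple[float, int]]:
    """Return (time_in_seconds, frame_index) pairs for `ticks` playback steps.

    The loop cycles through `loop_length` consecutive frames beginning at
    `start`, showing `fps` frames per second.
    """
    if loop_length < 1:
        raise ValueError("loop_length must be at least 1")
    if fps <= 0:
        raise ValueError("fps must be positive")
    return [(tick / fps, start + tick % loop_length) for tick in range(ticks)]


# A 3-frame loop at 2 frames per second, shown for 5 steps.
for seconds, frame in loop_schedule(loop_length=3, fps=2.0, ticks=5):
    print(f"t={seconds:.1f}s frame={frame}")
```

Changing `loop_length` from 3 to 4 changes how often the sequence repeats, which is what gives each loop its rhythmic feel.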

As new images are downloaded and become available for sequencing, the installation presents a slowly evolving set of musical possibilities based on whatever is happening in front of the remote web cams. The installation thus balances generative and interactive models in a single audio visual artwork.

The installation comprises a network-connected dual-processor G5 Macintosh, a projector, speakers and either a keyboard or a custom button interface. The custom software is made up of two modules: Collector, which downloads new images and saves them onto the hard disk, and Remote, which sequences the images. The installation also uses Logic and instruments from GarageBand.
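A module in the role of Collector could look roughly like the sketch below, which fetches the current image from each web cam and saves it under a timestamped filename. It is not the original code: the function names, the directory layout, the `.jpg` extension and the camera URLs are all assumptions, and the `fetch` and `now` parameters exist only so the download step can be swapped out.

```python
import time
import urllib.request
from pathlib import Path
from typing import Callable, Dict, List


def http_fetch(url: str) -> bytes:
    """Download the raw bytes at `url` (assumed to be a still image)."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read()


def collect_once(cams: Dict[str, str], out_dir: Path,
                 fetch: Callable[[str], bytes] = http_fetch,
                 now: Callable[[], float] = time.time) -> List[Path]:
    """Save one new image per camera and return the paths that were written."""
    saved: List[Path] = []
    stamp = int(now())
    for name, url in cams.items():
        try:
            data = fetch(url)
        except OSError:
            continue  # camera unreachable this round; try again next time
        cam_dir = out_dir / name
        cam_dir.mkdir(parents=True, exist_ok=True)
        path = cam_dir / f"{stamp}.jpg"
        path.write_bytes(data)
        saved.append(path)
    return saved
```

Calling `collect_once` on a timer would gradually build up one folder of frames per camera, which a separate playback module could then read as a sequence.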

The currently installed version of Remote uses 4 instruments per web cam: acoustic bass, two xylophones and a drum kit. The instruments are mapped across the 4 quadrants of the image: top left, top right, bottom left and bottom right. Frame-by-frame changes in these quadrants trigger notes from the instruments. Pitch is determined by where the change between frames takes place along the vertical axis within a quadrant: the higher the position of the change in the image, the higher the note generated. The tracking software draws coloured squares to indicate when a change has been detected and a MIDI event generated. This further underlines the connection between visual and auditory musicality which Remote seeks to exemplify.
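The quadrant mapping can be sketched as follows, under several assumptions that the description above does not settle: frames are small grayscale grids, a pixel counts as changed when it differs by more than a threshold, the topmost changed row in a quadrant sets the pitch, and the instruments are assigned to quadrants in the order listed. The names, the threshold and the note range are all hypothetical.

```python
from typing import List, Tuple

Frame = List[List[int]]  # rows of grayscale values, 0-255

# Assumed order: top left, top right, bottom left, bottom right.
INSTRUMENTS = ["acoustic bass", "xylophone 1", "xylophone 2", "drum kit"]


def detect_notes(prev: Frame, curr: Frame, threshold: int = 40,
                 low_note: int = 48, high_note: int = 72) -> List[Tuple[str, int]]:
    """Return one (instrument, MIDI pitch) event per quadrant that changed."""
    half_h, half_w = len(curr) // 2, len(curr[0]) // 2
    origins = [(0, 0), (0, half_w), (half_h, 0), (half_h, half_w)]
    events = []
    for quadrant, (top, left) in enumerate(origins):
        # Rows of this quadrant containing at least one changed pixel.
        changed_rows = [
            y for y in range(top, top + half_h)
            if any(abs(curr[y][x] - prev[y][x]) > threshold
                   for x in range(left, left + half_w))
        ]
        if not changed_rows:
            continue
        # Topmost changed row, measured from the top of the quadrant.
        y = changed_rows[0] - top
        # 1.0 at the top of the quadrant, 0.0 at the bottom: higher is higher.
        height_ratio = 1.0 - y / (half_h - 1) if half_h > 1 else 1.0
        pitch = low_note + round(height_ratio * (high_note - low_note))
        events.append((INSTRUMENTS[quadrant], pitch))
    return events
```

With odd image dimensions this sketch ignores the final row or column, which is acceptable for an illustration but would need handling in a real tracker.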

The system was developed at Fabrica during 2005. It was first seen in public as part of Heiner Goebbels' opera Surrogate Cities at La Fenice, Venezia, 28 September 2005. It is now being exhibited at the Fabrica exhibition "I've been waiting for you" at the Triad Gallery, Seoul, as part of the 4th New Media Arts Biennale in Korea.

Programming: Oriol Ferrer Mesià

Sound Design: Federico Urdaneta, Martyn Ware, Asa Bennett

Idea and direction: Andy Cameron

Physical Interface: Daniel Hirschmann


Other Projects:

FlipCast

Jun 6, 2006

MensaLady Widget

Sep 25, 2006

Fabrica Lapse

May 28, 2006

TimeLapse Screen Saver

Aug 1, 2006

Fabrica.it

Mar 10, 2005

HOME ENTERTAINMENT

An interactive mixed media installation by Fabrica artists exhibited in the windows of colette.

Jun 9, 2006

Kaleidoscope Screen Saver

Aug 1, 2006

TimeWarp

Mar 5, 2006

Fab's Answering Machine

Dec 20, 2005

Benettonplay!

May 1, 2006

Benetton Wool

Sep 6, 2006

FABRICA VIRTUALE

May 30, 2005

Istanbul Face

Sep 17, 2005

Text Face

Mar 5, 2006

Doodle Screen Saver

Mar 4, 2006

Benettonplay.com

Mar 31, 2006

Kaleidoscope

Feb 20, 2006

colorsmagazine.com

Apr 15, 2006

'Galleria': Interactive Installation for Giovanni Paolo Pannini exhibit.

Mar 1, 2005

netPong

Dec 1, 2006
